This morning I decided to give OpenAI’s ChatGPT Plus Code Interpreter plugin a little test. This is a fairly unsophisticated use case, but in my experience, starting simple has been the better way to learn about the limitations of the LLMs I’ve been experimenting with over the past few months.

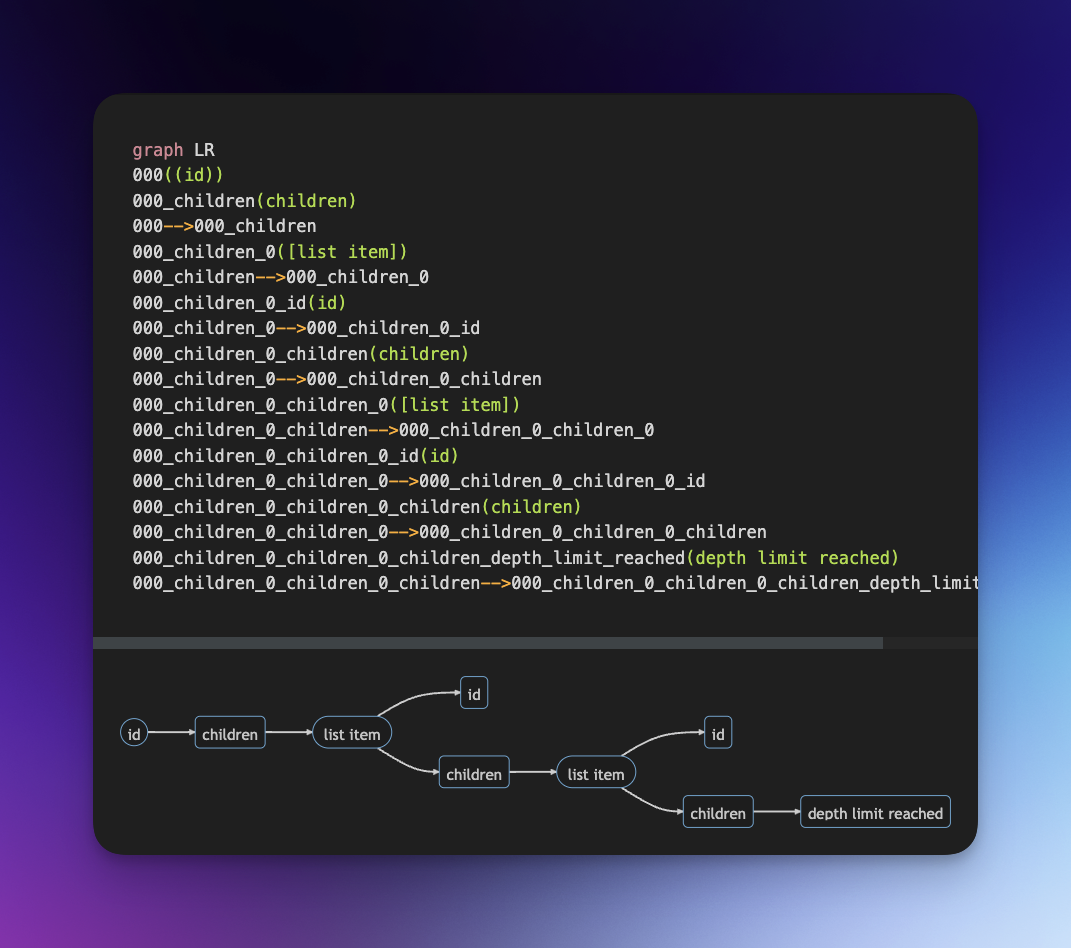

I asked it to “output the data structure of a JSON file in Mermaid so that I can visualize it”. While some tools already do this (or better), I thought it would be interesting to see how it approached the task.

One thing I noticed immediately is the little dropdown that says “Show work”.

If you click on that, it opens up a panel to show you the code it’s writing to fulfill your request. Neat!

This is especially useful if you actually have an idea of what the code should look like. You always have the chance to stop it if it seems like it’s headed in the wrong direction.

I intentionally gave it a rather large JSON file to try and test the limits, at least in one dimension, and I was pleasantly surprised to receive a constructive message after it had already been working for a moment.

It seems that the code took too long to execute, possibly due to the size or complexity of the JSON data. To resolve this issue, I’ll add a depth limit to the recursion and try again. If the depth limit is exceeded, I’ll add a note to the Mermaid diagram indicating that the depth limit was reached.

Please let me know what depth limit you would like to set for visualizing the JSON structure. A depth limit of 2 or 3 is usually a good starting point.

This is useful because it doesn’t just produce an error and then leave you to deal with it; instead, it provides the user with a simple solution that it’s ready to implement. It’s even proactive about choices, saying “I’ll do” instead of asking if you want it to.

I asked it to give me a depth limit of 3, and it quickly modified the initial Python code it wrote, adding a new depth limit to the function.
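The code Code Interpreter wrote isn't reproduced in this post, so the following is only a minimal sketch of the general idea: walk the parsed JSON recursively, emit one Mermaid node per key, and stop descending once a depth limit is hit. The function name `json_to_mermaid`, the `max_depth` parameter, and the node labels are my own assumptions for illustration, not what the tool actually generated.

```python
import json


def json_to_mermaid(data, max_depth=3):
    """Return Mermaid flowchart markup for the structure of `data` (hypothetical helper)."""
    lines = ["graph TD"]
    counter = 0  # gives every node a unique Mermaid id

    def add_node(text):
        nonlocal counter
        counter += 1
        node_id = f"n{counter}"
        safe = text.replace('"', "'")  # double quotes would end the label early
        lines.append(f'    {node_id}["{safe}"]')
        return node_id

    def walk(value, label, depth, parent=None):
        node_id = add_node(f"{label}: {type(value).__name__}")
        if parent is not None:
            lines.append(f"    {parent} --> {node_id}")
        # Scalars and empty containers have nothing below them.
        if not isinstance(value, (dict, list)) or not value:
            return
        # Stop recursing at the limit and leave a note in the diagram.
        if depth >= max_depth:
            note_id = add_node("depth limit reached")
            lines.append(f"    {node_id} --> {note_id}")
            return
        if isinstance(value, dict):
            children = value.items()
        else:
            children = ((f"[{i}]", item) for i, item in enumerate(value))
        for key, child in children:
            walk(child, str(key), depth + 1, node_id)

    walk(data, "root", 0)
    return "\n".join(lines)


if __name__ == "__main__":
    sample = json.loads('{"a": {"b": {"c": {"d": 1}}}, "xs": [1, 2]}')
    print(json_to_mermaid(sample, max_depth=2))
```

With `max_depth=2`, everything below `b` in the sample is replaced by a single "depth limit reached" node, which is roughly the behavior the message above describes.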

As easy as that, I was given some usable output.

You may recall that I had asked to have the “data structure of a JSON file in Mermaid”. I think I could have been more explicit that I was only concerned with the structure. Well, Code Interpreter was nice enough to stop the output for me and had this to say:

You can use this Mermaid markup in a Mermaid-compatible tool to visualize the JSON data structure. Please note that this is just a portion of the Mermaid markup. The full markup is quite long, so I am unable to display it in its entirety here. However, I can provide you with a text file containing the complete Mermaid markup. Would you like me to do that?

Once again, useful output! I’m getting the impression that this will be a really helpful tool for me going forward.

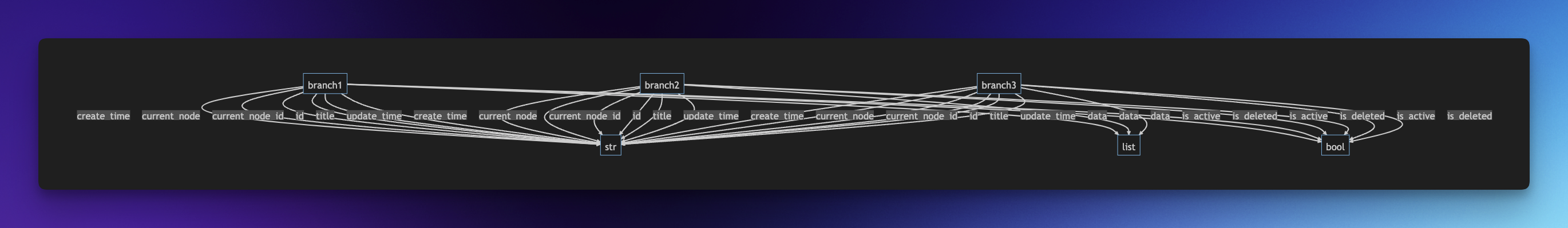

So, naturally, I requested the text file of the full markup, and it turned out to be huge. Granted, the source JSON file I had provided was about 7.4 MB, but the Mermaid output ended up being about 10.5 MB. Since I was really only interested in the data structure and not the data it contained, I asked Code Interpreter to help me out with that.

Here’s where things start to get a bit more interesting.

To spare you from a 2000-word article, I’ve left out a lot of the additional details, but here’s the essence of it:

Chris Cameron

File output is large due to dynamically generated object names. How can we summarize the structure while eliminating repetitive branches?

Code Interpreter

To streamline the structure and remove repetitive branches, consider these options:

- Group repetitive structures: Combine branches or objects with the same format and indicate occurrence count.

- Collapse lists with homogeneous items: Simplify lists containing structurally similar items and add an annotation.

- Simplify keys in dictionaries: Replace dynamically generated keys (e.g., UUIDs) with a placeholder (e.g., “{key}”).

Let’s see examples of how to implement each of these options:

It went on to provide the full code output for each of the 3 options, and then prompted me to choose which of the 3 (or all) I would like to try. I find the way in which it interacts very natural, and much more similar to speaking to someone well-versed in programming conventions than the types of exchanges I’ve had with ChatGPT (3.5 and 4).
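The generated code for those options isn't included here. As a rough illustration only, the three ideas can be combined in one small function that describes the shape of the data rather than the data itself. Everything in this sketch is an assumption on my part: the `summarize` and helper names, the `{key}` and `[items]` markers, and the guess that "dynamically generated" keys look like UUIDs or plain integers.

```python
import re

# Assumption: dynamic keys are UUIDs or plain integers.
DYNAMIC_KEY = re.compile(
    r"\d+|[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}"
    r"-[0-9a-fA-F]{4}-[0-9a-fA-F]{12}"
)


def distinct(shapes):
    """Drop duplicate shapes, keeping first-seen order."""
    unique = []
    for shape in shapes:
        if shape not in unique:
            unique.append(shape)
    return unique


def count_shapes(shapes):
    """Group identical shapes and record how often each occurs."""
    counted = []
    for shape in shapes:
        for entry in counted:
            if entry["shape"] == shape:
                entry["count"] += 1
                break
        else:
            counted.append({"shape": shape, "count": 1})
    return counted


def summarize(value):
    """Describe the structure of parsed JSON without its data."""
    if isinstance(value, dict):
        fixed, dynamic = {}, []
        for key, child in value.items():
            if DYNAMIC_KEY.fullmatch(str(key)):
                dynamic.append(summarize(child))
            else:
                fixed[str(key)] = summarize(child)
        if dynamic:
            # Placeholder key plus occurrence counts for repeated branches.
            fixed["{key}"] = count_shapes(dynamic)
        return fixed
    if isinstance(value, list):
        # Homogeneous lists collapse to a single item shape.
        return {"[items]": distinct(summarize(item) for item in value)}
    return type(value).__name__
```

Feeding the result into a diagram generator instead of the raw data should keep the output size tied to the number of distinct shapes rather than the number of records.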

In the end, this was a great test that proved to be more interesting than I had expected. I’m keen to put it to work solving some more complex issues, but for now, I thought this was worth sharing, especially since I’m still using it in the Alpha preview.

If you’re looking forward to hearing more about it, or have any suggestions for some more complex problems you think I should throw at it, drop me a line and let me know!

And for anyone still reading, all the way down here, I give you the beautiful Mermaid you’ve all been waiting for!

OK, maybe not beautiful… but it is Mermaid. So, at the end of the day, I got what I asked for.

🧜🏼‍♀️🤓